Using Coreference Resolution and Topic Modeling to Identify Land Conflicts and Its Causes

Improving topic modeling performance through coreference resolution to find mediating policies for land conflicts in India.

May 11, 2020

3 minutes read

Identifying environmental conflict events in India using news media articles

Part of this project was to scrape news media articles to identify environmental conflict events such as resource conflicts, land appropriation, human-wildlife conflict, and supply chain issues.

With an initial focus on India, we also connected conflict events to their jurisdictional policies to identify how to resolve those conflicts faster or to identify a gap in legislation.

Part of the pipeline in building this Language Model was a semi-supervised attempt in order to be Improving Topic Modeling Performance to increase environmental sustainability, whose process and the outcome are available here.

In short, in order to make this Topic Modeling model robust, Coreference Resolution was suggested as one of the possible additions.

The Solution

What exactly is Coreference Resolution?

Coreference resolution is the task of finding all expressions that refer to the same entity in a text (1)

Use Cases

- In the context of this project, Coreference Resolution could be best used in order to Improving Topic Modeling Performance by replacing references with the same entity in order to better model the actual meaning of the text. This increases the Tf-Idf of generalized entities and it removes ambiguous words that are meaningless for classification.

- Another use-case would be to use the Coreferenced text data as additional features, along with Named Entity Recognition tags, in any classification approach. A one-hot-encoded version of unique entities can be used as input to factorization machines or other approaches for spare modeling.

Which packages are available to implement it?

Exploring almost every available python package out there.

We toyed around with some packages which seemed good in theory but were rather challenging to apply to our specific task. We needed a package that would be user-friendly, as a script would have to be developed for 28 people to take and be able to apply without much struggle.

NeuralCoref, Stanford NLP, Apache Open NLP, and Allennlp. After trying out each package, I personally preferred Allennlp, but as a team, we decided to use NeuralCoref with a short but effective script written by one of the collaborators Srijha Kalyan.

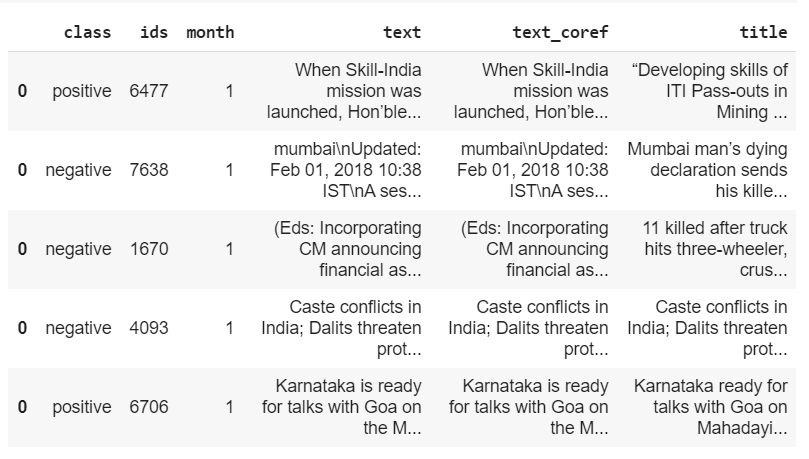

The code was applied to the article data which was annotated by fellow collaborators from the Annotation Task Group. This resulted in a CSV file with the original article titles, the original article text, and a new column of Coreference article text; not as chains but in the same written format as the original article text.

The output was then sent to the Topic Modeling Task Team, which at that point was sitting on an accuracy of 83%, with the Coreference Resolution data, the accuracy jumped to 93%.

That’s an 11% improvement! All the hard work and hours were clearly worth it!

You might also like

- Using Topic Modelling and NLP Uncovering Infrastructural Needs

- 4 Steps of Using Latent Dirichlet Allocation for Topic Modeling in NLP